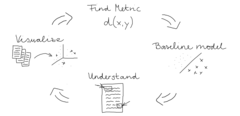

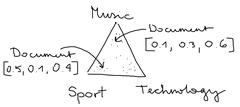

May 12, 2022

How to add new tokens to huggingface transformers vocabulary

In this short article, you’ll learn how to add new tokens to the vocabulary of a Hugging Face Transformers model.